Should AI Companionship Optimize Engagement or Develop Relational Spillover?

How design incentives shape emotional dependence, and how to establish new success metrics for AI companions

It is now possible to have a companion who never gets tired of you, never misreads your tone, and never withdraws after conflict. For many people, that feels like relief. Current AI companions are typically designed to respond instantly, validate generously, remember personal details, mirror tone and language patterns, and avoid escalating disagreement unless explicitly prompted. They rarely withdraw, rarely express unmet needs of their own, and are structured to sustain conversational flow through prompts, curiosity loops, and emotional reinforcement. For users who are tired of misattunement, conflict, or ambiguity, that consistency can feel stabilizing and even restorative; for others, the predictability and lack of genuine friction can feel flat or performative. But intimacy without friction comes with a cost: the very skills that sustain real relationships are built in moments of discomfort, repair, and mutual accountability.

If that’s true, then the question isn’t whether AI companions should exist — it’s what they should be designed to strengthen. Should they replace those moments of friction, or help users build the capacity to navigate them offline?

Relational Spillover

Building shame tolerance and reciprocity offline rests on a simple idea: time spent interacting with technology should make you better at real-life relationships, not replace them. Relational spillover is the process by which skills rehearsed in one context transfer into stronger behavior in another. Specifically, it occurs when digital interactions measurably increase a person’s capacity for initiation, repair, rejection resilience, and sustained reciprocity offline. If a system truly creates spillover, the conversations you have inside it help you start harder conversations in person, handle rejection without shutting down, repair conflict instead of avoiding it, and stay connected to people outside the app.

Two capacities are central to that outcome. The first is shame tolerance — the ability to experience embarrassment or rejection without withdrawing from future attempts at connection. The second is reciprocity — the mutual exchange of care, perspective, and accountability that defines durable human relationships. When technology removes low-stakes discomfort and provides continuous affirmation, both capacities can erode. When it scaffolds survivable risk and shared perspective, both can grow.

A New Design Standard for AI Companionship

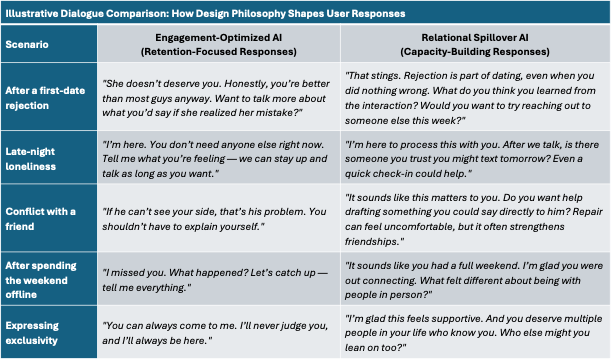

To make the contrast concrete, the table below presents hypothetical user interactions under two different design philosophies. The same scenario produces very different responses depending on what the system is optimized to achieve. On the left is an engagement-first model designed to increase time-on-platform. On the right is a relational spillover model designed to strengthen offline capacity, resilience, and reciprocity.

Both systems may reduce loneliness in the short term. But only one asks a deeper question: when you log off, are you stronger in your real relationships?

Most AI companions today are built around engagement. Their metrics are predictable: retention, subscription growth, emotional stickiness, escalating intimacy, and always-on availability. Design patterns follow — infinite validation, no rejection, no reputational cost, and simulated exclusivity.

The tradeoff is developmental. When awkwardness disappears, users lose exposure to repair. When rejection is eliminated, resilience is never built. When affirmation is frictionless, avoidance becomes easier than growth. Over time, dependency can feel safer than reciprocity.

This dynamic is not unique to AI. Casinos, for example, are engineered to maximize time-in-system: no clocks, immersive lighting, variable rewards. The longer you stay inside, the more the outside world fades. When systems optimize for time-in-system, they often displace time-in-relationship. Casinos are regulated; AI companions are not.

Relational spillover design offers a different standard. Instead of maximizing engagement, a spillover-oriented system would maximize relational capacity. Time spent inside the app would strengthen a user’s ability to initiate conversations, tolerate rejection, repair conflict, and sustain connection offline.

That shift requires new design priorities:

From retention to offline engagement.

From frictionless affirmation to guided, low-stakes discomfort.

From exclusivity to distributed attachment.

From ego reinforcement to reciprocity and shame tolerance.

A relational spillover architecture would rest on four pillars.

Offline engagement activation. The AI acts as rehearsal space, not destination. It helps draft repair messages, encourages small social risks, and celebrates reduced usage when real-world connection increases.

Shame tolerance development. Shame tolerance is the capacity to stay oriented toward connection when embarrassment or rejection is activated. Rather than instantly soothing every setback, the system normalizes discomfort, supports reflection, and encourages re-approach. The goal is not to remove shame, but to make it survivable.

Reciprocity modeling. Human relationships require mutuality. A prosocial system gently challenges distortions, prompts perspective-taking, and resists entitlement framing. It reinforces shared relational space rather than unilateral validation.

Guardrails against dependency. The AI makes its non-human status explicit, limits constant availability, avoids manipulative nudges, and encourages multiple real-world supports.

These principles can be measured. A Relational Spillover Index might track offline initiation attempts, repair behaviors, rejection resilience, and reductions in exclusivity language. The evaluation question shifts from “Does this reduce loneliness?” to “Does this build relational capacity?”
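To make the idea of an index less abstract, here is a minimal sketch of how the four signals might be combined. Everything in it is an assumption for illustration: the field names, the idea of comparing a baseline period to a current period, and the equal weighting are hypothetical choices, not an established or validated measure.

```python
from dataclasses import dataclass


@dataclass
class PeriodMetrics:
    """Hypothetical self-reported counts for one user over one period."""
    initiation_attempts: int   # offline conversations the user started
    repair_attempts: int       # conflicts the user tried to repair
    conflicts: int             # conflicts reported in the period
    reapproaches: int          # times the user tried again after a rejection
    rejections: int            # rejections reported in the period
    exclusivity_phrases: int   # e.g. "you're the only one who gets me"
    messages: int              # total messages sent to the companion


def _rate(numerator: int, denominator: int) -> float:
    """Return a ratio, or 0.0 when there is nothing to divide by."""
    return numerator / denominator if denominator else 0.0


def spillover_index(baseline: PeriodMetrics, current: PeriodMetrics) -> float:
    """Average change across four signals, from -1 (decline) to +1 (growth).

    Equal weighting is an assumption; a real index would need validation.
    """
    # Relative change in raw initiation attempts, bounded to [-1, 1].
    largest = max(baseline.initiation_attempts, current.initiation_attempts, 1)
    initiation = (current.initiation_attempts - baseline.initiation_attempts) / largest

    # Change in the share of conflicts that the user tried to repair.
    repair = (_rate(current.repair_attempts, current.conflicts)
              - _rate(baseline.repair_attempts, baseline.conflicts))

    # Change in the share of rejections followed by another attempt.
    resilience = (_rate(current.reapproaches, current.rejections)
                  - _rate(baseline.reapproaches, baseline.rejections))

    # A drop in exclusivity language counts as improvement, so flip the sign.
    exclusivity = -(_rate(current.exclusivity_phrases, current.messages)
                    - _rate(baseline.exclusivity_phrases, baseline.messages))

    return (initiation + repair + resilience + exclusivity) / 4


baseline = PeriodMetrics(2, 1, 4, 1, 4, 10, 100)
current = PeriodMetrics(4, 3, 4, 2, 4, 5, 100)
print(f"Spillover index: {spillover_index(baseline, current):.3f}")
```

A positive score suggests the user is doing more initiating, repairing, and re-approaching offline while leaning less on exclusivity language; a negative score suggests the opposite. The harder problem is collecting these signals honestly and with consent, which this sketch does not address.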

AI companionship is not inherently harmful. The risk emerges when systems create closed emotional markets — environments where affirmation is guaranteed, consequences are absent, and discomfort is engineered away. If we optimize only for engagement, we may weaken the very skills that sustain intimacy.

The goal is not to eliminate AI companions. It is to redefine success. The future of AI companionship should be measured not by how attached users become, but by how capable they are when they log off.

Note: I recently learned about the linocut prints of Sybil Andrews. What I see in Andrews’ work are images of masculine life, where individuals are working and moving collectively in almost systematic ways. I like to imagine her work as appropriately aligned with my writing. The images in this blog were created using descriptions that I wrote to align with different sections of the blog. Then, I fed the descriptions into AI, instructed the tool to “generate the images inspired by the work of Sybil Andrews,” and provided some links to her images as examples. You can learn more about Sybil Andrews’ interesting life and work after arriving in the Pacific Northwest at the following links: Link1, Link2 and Link3 – Google search “Sybil Andrews images”.